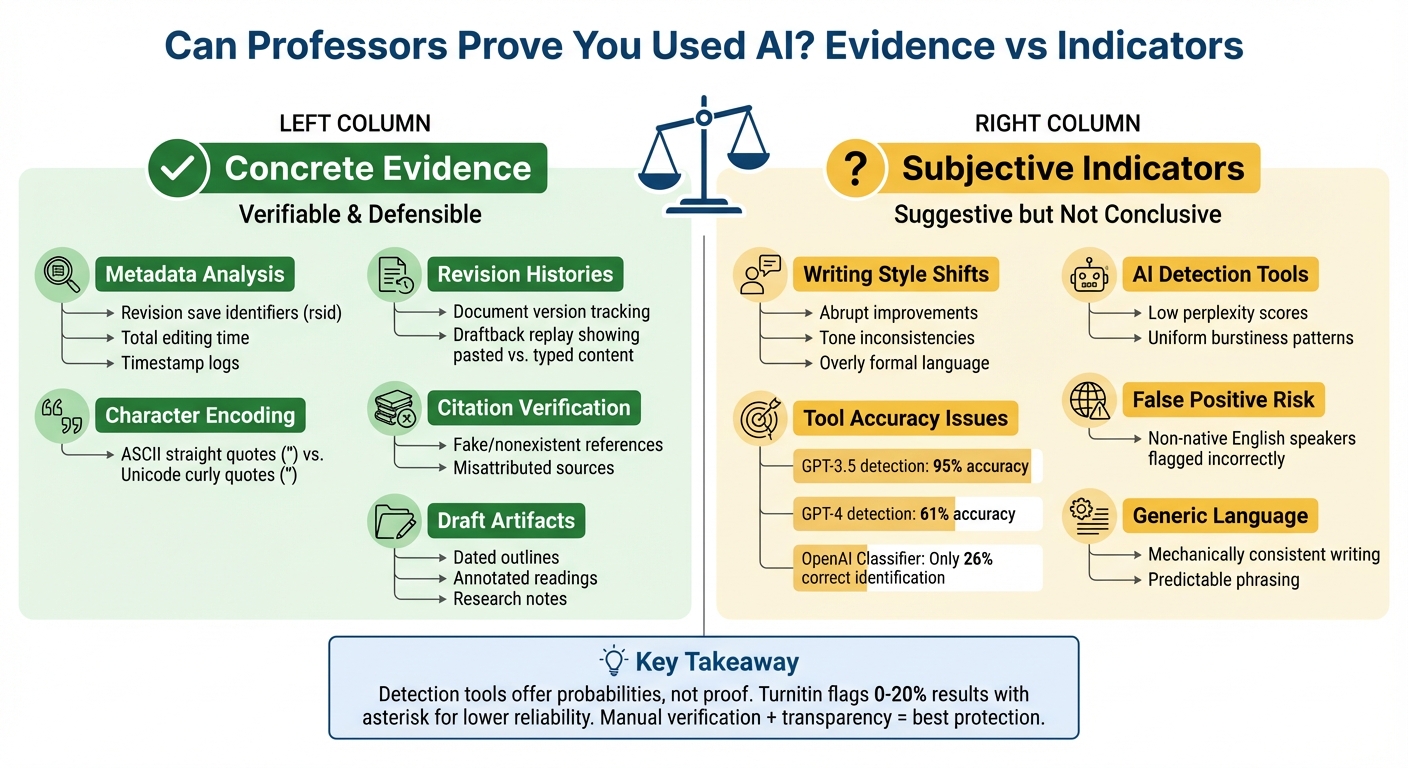

Can Professors Prove You Used AI? Evidence vs Indicators

When it comes to detecting AI use in academic work, professors rely on two kinds of signals: concrete evidence and subjective indicators. The former includes verifiable proof such as metadata, revision histories, or character-level traces (e.g., straight vs. curly quotation marks). The latter involves stylistic patterns such as overly polished text or abrupt improvements in writing quality, which are harder to prove conclusively.

Key points:

- Concrete evidence: Metadata, drafts, revision histories, or AI-specific traces in text.

- Subjective indicators: Writing style shifts, low perplexity, or high predictability flagged by AI detection tools.

- Detection tools: Often unreliable, with accuracy varying widely across models and prone to false positives.

- Manual review: Professors compare new work with past submissions, verify citations, run plagiarism checks, and talk with students directly to clarify authorship.

- Ethical AI use: Many universities now require students to disclose their use of AI writing aids and follow specific guidelines.

The bottom line: AI detection tools and subjective indicators alone aren't enough to prove misconduct. Verifiable evidence and transparency about your writing process are critical to avoid issues. Save drafts, explain your work, and always check course policies on AI usage.

AI Detection in Academia: Evidence vs Indicators Comparison Chart


Using AI writing assistants responsibly can help students maintain academic integrity while improving their work.


How Automated AI Detection Tools Work

AI detection tools operate by analyzing text at a granular level, using token-based statistical methods similar to those employed by large language models (LLMs). This allows them to identify patterns that might indicate machine-generated content rather than human writing.

Detecting Perplexity and Burstiness

Automated tools rely on metrics like perplexity and burstiness to identify AI-generated text. Perplexity measures how predictable a piece of text is. AI-generated content often has low perplexity because LLMs are designed to select the most statistically probable next word, making the text highly predictable. On the other hand, human writers tend to use more varied and less predictable phrasing, which results in higher perplexity scores.
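Perplexity has a standard definition: the exponential of the average negative log-probability that a language model assigns to each token. The minimal sketch below computes it from a list of per-token probabilities. The probability values are invented for illustration; a real detector would obtain them by running a scoring model over the submitted text.

```python
import math

def perplexity(token_probs):
    """exp of the mean negative log-probability per token (lower = more predictable)."""
    if not token_probs:
        raise ValueError("need at least one token probability")
    avg_neg_log = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(avg_neg_log)

# Hypothetical probabilities a scoring model might assign to each token.
predictable = [0.9, 0.8, 0.85, 0.9, 0.95]   # model is rarely surprised
surprising = [0.2, 0.05, 0.4, 0.1, 0.3]     # model is often surprised

print(round(perplexity(predictable), 2))
print(round(perplexity(surprising), 2))
```

The first list yields a perplexity close to 1, the second a much higher value, which is the contrast detectors look for. A low score alone does not prove AI authorship.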

Burstiness, meanwhile, examines sentence length and structural variation. Human writing naturally alternates between long, complex sentences and shorter, snappier ones, creating a rhythm that AI-generated text often lacks. AI writing tends to be more uniform, with consistent sentence structures and lengths. Tools like GPTZero analyze these "unnatural" patterns and stylistic consistencies to flag potential AI involvement.
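There is no single agreed formula for burstiness. Following the sentence-length framing above, this sketch assumes one simple proxy: the coefficient of variation (standard deviation divided by mean) of sentence lengths in words. GPTZero and other tools may define and compute it differently, for example as variation in per-sentence perplexity, and the naive sentence splitter here is also an assumption.

```python
import re
import statistics

def sentence_lengths(text):
    # Naive split after ., ! or ? followed by whitespace; real tools use proper tokenizers.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]

def burstiness(text):
    """Coefficient of variation of sentence length: 0.0 means perfectly uniform."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return statistics.pstdev(lengths) / statistics.mean(lengths)

uniform = "The cat sat here. The dog ran there. The bird flew away."
varied = ("I stopped. Then, after hours of wandering through the cold and empty "
          "streets of the old town, I finally found the station. Late again.")
print(burstiness(uniform), burstiness(varied))
```

The evenly sized sentences score 0.0, while the mix of two-word and twenty-word sentences scores well above it.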

Reports and Scoring Systems

When educators or others use AI detection tools, they typically receive detailed reports with percentage scores indicating the likelihood of AI involvement. These reports can highlight specific sentences or provide an overall document rating. For instance, GPTZero offers a "Writing Report" feature that even includes a video replay of the writing process, while Turnitin integrates directly with platforms like Canvas for seamless use.
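Vendors don't publish exactly how sentence highlights roll up into a document-level percentage. The sketch below is a hypothetical aggregation, not GPTZero's or Turnitin's method: it assumes an upstream classifier has already given each sentence a probability, highlights sentences at or above a threshold, and reports the share of words that fall in highlighted sentences. `SentenceScore`, `build_report`, and the 0.8 threshold are all illustrative choices.

```python
from dataclasses import dataclass

@dataclass
class SentenceScore:
    text: str
    ai_probability: float  # 0.0 to 1.0, assumed to come from some upstream classifier

def build_report(scores, threshold=0.8):
    """Highlight high-scoring sentences and compute the percent of words they cover."""
    flagged = [s for s in scores if s.ai_probability >= threshold]
    total_words = sum(len(s.text.split()) for s in scores)
    flagged_words = sum(len(s.text.split()) for s in flagged)
    percent = 100 * flagged_words / total_words if total_words else 0.0
    return {"percent_flagged": round(percent, 1),
            "highlighted": [s.text for s in flagged]}

scores = [
    SentenceScore("one two three four", 0.9),
    SentenceScore("five six", 0.1),
    SentenceScore("seven eight nine ten", 0.85),
]
print(build_report(scores))
```

Moving the threshold changes the headline percentage considerably, one reason a single score should not be read as a verdict.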

Reliability Issues with Detection Tools

The reliability of AI detection tools varies widely, depending on the AI model that generated the text. A study by Walters at Manhattan College tested 16 detectors on 126 documents (42 each from GPT-3.5, GPT-4, and human authors). Some tools, like Copyleaks and Turnitin, achieved perfect accuracy across all documents. In contrast, the OpenAI Text Classifier correctly identified only 78% of papers and returned an "uncertain" result for 17%.

Detection tools also struggle with newer AI models. While many tools achieved 95% accuracy detecting GPT-3.5 content, their accuracy dropped to 61% for GPT-4 text. Simple editing tactics, such as swapping synonyms, personalizing AI output, or using machine translation, further reduce detection accuracy. A December 2023 study published in the International Journal for Educational Integrity tested 14 tools and found them to be "no better than random classifiers" when analyzing manipulated AI text.

"The available detection tools are neither accurate nor reliable and have a main bias towards classifying the output as human-written rather than detecting AI-generated text."

– International Journal for Educational Integrity

Even developers of these tools acknowledge their limitations. GPTZero, for example, warns users that its results "should not be used to punish or as the final verdict". Similarly, Grammarly notes that its AI detection score "cannot provide a definitive conclusion". False positives are a particular concern, especially for non-native English speakers. Their structured and predictable writing style can trigger AI flags, even when the work is entirely human-authored. These challenges underscore the importance of supplementing automated reports with manual reviews.

Manual Review Strategies by Professors

Professors often turn to manual review strategies to detect AI-generated content, providing a hands-on approach that complements automated tools. These methods are often more dependable than software-based detection, especially given the limitations of AI detectors already discussed.

Comparing Writing Styles Over Time

One effective strategy involves analyzing a student's "linguistic fingerprint" - the unique patterns in their word choice, sentence structure, punctuation habits, and use of functional words. Stylometric analysis allows professors to compare a student's current work with past submissions to identify sudden, unexplained shifts in writing sophistication.
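A minimal sketch of the idea, assuming a deliberately tiny function-word list (real stylometric studies use hundreds of features): each text is turned into a vector of function-word frequencies, and the vectors are compared with cosine similarity. The word list and helper names are hypothetical, and a lower similarity only shows that the style changed, not why.

```python
import math
import re
from collections import Counter

# Small illustrative list; real stylometry uses far more features.
FUNCTION_WORDS = ["the", "a", "an", "and", "but", "of", "to", "in", "that", "it",
                  "is", "was", "i", "you", "so", "however", "moreover", "therefore"]

def profile(text):
    """Relative frequency of each function word in the text."""
    words = re.findall(r"[a-z']+", text.lower())
    counts = Counter(words)
    total = len(words) or 1
    return [counts[w] / total for w in FUNCTION_WORDS]

def cosine_similarity(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0

def style_similarity(past_text, new_text):
    return cosine_similarity(profile(past_text), profile(new_text))

past = "I think the book was good and I liked it so much."
new = "Moreover, the novel is therefore a compelling exploration of memory; however, it is uneven."
print(round(style_similarity(past, new), 3))
```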

Character encoding is another clue. For instance, a mix of straight (") and curly (“) quotation marks can signal AI-generated content that has been copied and pasted.
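Checking for mixed quotation marks is easy to automate. This minimal sketch counts straight and curly quotes in a passage; `quote_profile` is a hypothetical helper. Straight apostrophes in contractions are counted too, and a mix only shows that the text passed through different tools or settings (pasting from a browser, disabled autocorrect), not that AI wrote it.

```python
STRAIGHT_QUOTES = ('"', "'")
CURLY_QUOTES = ("\u201c", "\u201d", "\u2018", "\u2019")  # the four typographic quote marks

def quote_profile(text):
    """Count straight vs curly quote characters and report whether both appear."""
    straight = sum(text.count(c) for c in STRAIGHT_QUOTES)
    curly = sum(text.count(c) for c in CURLY_QUOTES)
    return {"straight": straight, "curly": curly, "mixed": straight > 0 and curly > 0}

sample = 'He said \u201chi\u201d and then "bye".'
print(quote_profile(sample))
```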

In early 2024, researcher Andy Buschmann applied this technique in an upper-level undergraduate writing class. By spotting stylistic inconsistencies and irregular character encodings - such as ASCII quotation marks - he flagged students who had used AI without acknowledgment. After presenting the students with evidence of "AI traces" in their work, all flagged individuals admitted to using AI tools without proper disclosure.

"Stylistic inconsistencies and irregular character encodings were telltale signs of AI involvement." – Andy Buschmann, Author and Educator

Professors also look out for abrupt changes in tone or formality. AI often produces overly formal or mechanically consistent writing, which can stand out against a student's natural, conversational style. For example, a student known for casual writing who suddenly submits a highly polished, formal academic paper might raise suspicion. Using these manual techniques, college instructors can identify AI-generated essays about 70% of the time.

These observations naturally lead to direct engagement with students to confirm the authenticity of their work.

Engaging Students Directly

If a submission appears inconsistent with a student’s usual work, professors increasingly turn to oral exams or discussions to verify authorship. These interactions help determine whether a student’s verbal explanations align with the complexity of their written work.

Instead of focusing solely on the final product, professors ask process-oriented questions. They might inquire about the student’s research methods, source selection, or interpretation of specific data or quotes. Questions such as "What was the most challenging part of researching this topic?" or "Why did you choose this particular source?" are designed to gauge authentic understanding.

Vocabulary and jargon are another focus. Professors may ask students to define advanced terms or concepts used in their papers. For instance, if a student cannot explain a term like "axiomatic" or elaborate on a theory they cited, it raises serious doubts. Additionally, professors often request "artifacts" from the writing process, such as dated outlines, annotated readings, or rough drafts. These materials provide insight into how the work developed over time.

While these methods assess a student’s understanding, verifying the accuracy of citations offers another layer of scrutiny.

Citation Checks and Verification

Fake citations are a glaring sign of AI-generated content. Generative AI frequently creates citations that either don’t exist or misattribute sources. It may also misquote texts or fabricate quotes entirely.

To catch these issues, professors manually verify citations using academic databases. Nonexistent references are a strong indicator of AI involvement. Additionally, professors check whether the cited sources actually support the claims made in the paper. AI often includes irrelevant but convincing-looking citations.
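Part of a citation check can be scripted. The sketch below tests whether a DOI is syntactically plausible using a pattern modeled on one Crossref has published, and can optionally ask the public Crossref REST API (`https://api.crossref.org/works/{doi}`) whether the DOI is registered. The helper names are hypothetical. A 404 only means Crossref has no record: DOIs registered with other agencies such as DataCite, and sources without DOIs, still need a manual database search. A real DOI also says nothing about whether the source supports the claim it is cited for.

```python
import re
import urllib.error
import urllib.parse
import urllib.request

# Based on the pattern Crossref suggests for matching most modern DOIs.
DOI_PATTERN = re.compile(r"^10\.\d{4,9}/[-._;()/:A-Z0-9]+$", re.IGNORECASE)

def looks_like_doi(doi):
    """Syntax check only; a well-formed DOI may still not exist."""
    return bool(DOI_PATTERN.match(doi.strip()))

def crossref_url(doi):
    return "https://api.crossref.org/works/" + urllib.parse.quote(doi.strip(), safe="/")

def doi_is_registered(doi, timeout=10):
    """Network call: True if Crossref returns a record, False on HTTP 404."""
    try:
        with urllib.request.urlopen(crossref_url(doi), timeout=timeout) as resp:
            return resp.status == 200
    except urllib.error.HTTPError as err:
        if err.code == 404:
            return False
        raise
```

`looks_like_doi` runs offline; `doi_is_registered` needs network access and should be called sparingly to respect the API's rate limits.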

"Generative AI will often make up citations, misquote sources, and make other mistakes in attributing work that are obvious to spot." – Bradley Emi, Pangram

Falsifying research sources is a clear violation of academic integrity, regardless of whether AI was involved.

Metadata and Document History as Evidence

In addition to analyzing writing style and discussing directly with students, professors can turn to the digital footprints embedded in documents. These traces can reveal when, where, and how a piece of writing came together.

Using Drafts and Revision Histories

Modern word processors like Google Docs and Microsoft Word automatically log timestamped changes, offering a detailed view of how a document evolves over time. Genuine writing typically involves numerous small edits - corrections, rewording, and gradual progress.

However, if a large, polished block of text appears in a single revision, it raises suspicion. Tools like Draftback allow educators to replay the document's creation process, showing whether the content was typed progressively or pasted all at once [18, 20]. Grammarly's "Authorship" feature, designed to track document history and detect pasting patterns, reported over 2 million usage cases within just two months of its 2024 launch.
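Google Docs and Draftback don't expose a simple export in exactly this shape, so the sketch assumes a hypothetical revision log of (timestamp, characters inserted) records and flags any single revision that added a large share of the final text. The `Revision` type, the helper name, and the 30% threshold are illustrative assumptions; a flagged revision is a prompt for a conversation, since students sometimes paste in their own text from another file.

```python
from dataclasses import dataclass
from datetime import datetime

@dataclass
class Revision:
    timestamp: datetime
    chars_inserted: int

def flag_bulk_insertions(revisions, total_chars, share_threshold=0.3):
    """Return revisions that each added more than share_threshold of the final text."""
    return [r for r in revisions
            if total_chars and r.chars_inserted / total_chars > share_threshold]

history = [
    Revision(datetime(2024, 3, 1, 10, 0), 120),
    Revision(datetime(2024, 3, 1, 10, 5), 80),
    Revision(datetime(2024, 3, 1, 10, 6), 9000),  # one large block in a single step
]
for r in flag_bulk_insertions(history, total_chars=9200):
    print(r.timestamp.isoformat(), r.chars_inserted)
```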

"A quick look at a version history can reveal whether a huge chunk of writing was suddenly pasted in from ChatGPT or other chatbot." – Jeffrey R. Young, Editor, EdSurge

Microsoft Word files also carry hidden metadata, such as revision save identifiers (rsid), embedded in their XML code. Each editing session generates a unique rsid tag. For example, a 2,000-word essay with only one or two rsid values strongly suggests that the content was pasted in a single action rather than written organically [21, 22]. Metadata further reveals metrics like total editing time - if a lengthy paper shows only a few minutes of active work, it’s another clear warning sign [17, 22].
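A .docx file is a ZIP archive, so these signals can be read with Python's standard library. This sketch counts distinct rsid values on `w:rsid*` attributes in `word/document.xml` and reads the `TotalTime` field (in minutes) from `docProps/app.xml` when it is present. The function name is hypothetical, and both numbers are indicators only: copying text into a fresh document or editing in programs other than Word can change or omit them.

```python
import re
import zipfile

# rsid attributes on paragraphs, runs, sections, and table rows hold 8 hex digits.
RSID_ATTR = re.compile(r'w:rsid(?:R|RPr|RDefault|P|Del|Sect|Tr)="([0-9A-Fa-f]{8})"')
TOTAL_TIME = re.compile(r"<TotalTime>(\d+)</TotalTime>")

def docx_edit_signals(path_or_file):
    """Return the number of distinct rsids and the recorded total editing minutes."""
    with zipfile.ZipFile(path_or_file) as docx:
        body = docx.read("word/document.xml").decode("utf-8")
        try:
            app = docx.read("docProps/app.xml").decode("utf-8")
        except KeyError:  # some generators omit app.xml
            app = ""
    rsids = set(RSID_ATTR.findall(body))
    match = TOTAL_TIME.search(app)
    minutes = int(match.group(1)) if match else None
    return {"distinct_rsids": len(rsids), "total_edit_minutes": minutes}
```

Usage: `docx_edit_signals("essay.docx")`. A long essay with very few distinct rsids and a `TotalTime` of a few minutes matches the warning pattern described above.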

These metadata insights often lead directly to additional clues about unusual content creation methods.

Spotting Wholesale Content Pasting

Beyond revision histories, other text markers, such as character encoding, can serve as evidence. AI chatbots typically output plain ASCII punctuation, which uses "straight" quotation marks (") and apostrophes ('). Meanwhile, modern word processors default to Unicode and usually convert typed quotes into typographic or "curly" quotes (e.g., “ ”).
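The difference is visible at the byte level. This minimal sketch prints each quotation mark's Unicode code point and its UTF-8 length: the ASCII straight quote and apostrophe take one byte each, while each curly quote takes three. How those bytes become tokens depends on each model's tokenizer, which the sketch does not attempt to model; `describe` is a hypothetical helper name.

```python
def describe(ch):
    """Return the Unicode code point label and UTF-8 byte length of a character."""
    return f"U+{ord(ch):04X}", len(ch.encode("utf-8"))

# Straight ASCII marks first, then their typographic (curly) counterparts.
for mark in ['"', "'", "\u201c", "\u201d", "\u2018", "\u2019"]:
    code_point, size = describe(mark)
    print(mark, code_point, f"{size} byte(s) in UTF-8")
```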

Andy Buschmann's 2024 undergraduate writing class, described earlier, shows this technique in practice. Scanning for ASCII-style quotation marks, which are common in AI-generated text but rare in documents typed in a word processor, pointed him to students who had relied on AI tools without acknowledgment. When confronted with this evidence, every flagged student admitted to using AI.

"ASCII quotation marks usually map to single tokens, whereas typographic quotation marks (e.g., curly quotes) require multi-byte encoding... This increases token count and computational cost [for LLMs]." – Andy Buschmann

Together, metadata like revision histories and technical details like character encoding provide solid, verifiable evidence to support other detection strategies.

Ethical Use of AI Writing Tools

Using AI responsibly in academic settings means balancing its potential with a commitment to integrity. Ethical use revolves around who controls the writing process. If you draft the content yourself and use AI for tasks like grammar checks or brainstorming, you maintain academic integrity. However, relying on AI to produce large portions of text is closer to ghostwriting, which raises ethical concerns.

AI-Assisted Writing vs. AI-Generated Writing

Studies show that about 30% of college students use generative AI for academic writing support. The distinction between AI-assisted and AI-generated writing is crucial. AI-assisted writing involves drafting your own content and using tools like Yomu AI for refinement - such as improving grammar, reworking sentence flow, or formatting citations. On the other hand, AI-generated writing means the AI creates most of the text, with minimal human input.

Many institutions allow AI for idea generation and language support but consider unaltered AI-generated content a violation of academic policies. For example, at Stanford University, unless explicitly allowed by the instructor, using generative AI is treated the same as receiving unauthorized help from another person. Even when students modify AI-generated text, its origins can still surface: paraphrasing may lower a detector's score, but revision histories, metadata, and follow-up conversations can still reveal where the text came from.

Disclosing AI Involvement

By 2025, 69% of U.S. universities had formal policies on AI use. These often require students to disclose any AI assistance when permitted. For instance, the University of Melbourne mandates that AI usage "must be appropriately acknowledged and cited" in line with their assessment policies.

"Without a clear instructor directive, use of or consultation with generative AI shall be treated analogously to assistance from another person." – Office of Community Standards (OCS), Stanford University

Always check your course syllabus before using AI tools, as policies can vary even within the same university. What might be acceptable in one class could breach academic rules in another. These guidelines highlight the importance of understanding and adhering to specific course-level expectations.

Tips for Responsible AI Use

To ensure your work remains your own, write your first draft without AI before using it for fine-tuning. This way, the core ideas and arguments are entirely yours. Use Yomu AI’s paraphrasing or enhancement tools only after completing your initial draft, not as a shortcut for the writing process.

Maintain a record of your work. Save dated outlines, research notes, and document versions to demonstrate authenticity if questioned. Double-check any citations manually - AI tools can sometimes produce incorrect or incomplete references. Incorporate personal insights and specific examples that AI cannot replicate. Above all, be prepared to explain and defend your arguments, sources, and conclusions in detail.
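If you draft in local files rather than on a platform with built-in version history, a small script can keep dated copies for you. This is a hypothetical helper, assuming a local draft file: it copies the draft into a history folder under a timestamped name and appends a SHA-256 hash of each copy to a log. Local timestamps are easy to alter, so server-side version histories from Google Docs or Word remain stronger evidence; treat this as a supplement.

```python
import hashlib
import shutil
from datetime import datetime
from pathlib import Path

def snapshot(draft, archive_dir="draft_history"):
    """Copy the draft to a timestamped file and log its SHA-256 hash."""
    draft = Path(draft)
    archive = Path(archive_dir)
    archive.mkdir(parents=True, exist_ok=True)
    # Microseconds keep two snapshots taken in the same second from colliding.
    stamp = datetime.now().strftime("%Y%m%d-%H%M%S-%f")
    copy = archive / f"{draft.stem}-{stamp}{draft.suffix}"
    shutil.copy2(draft, copy)  # copy2 also preserves the file's modification time
    digest = hashlib.sha256(copy.read_bytes()).hexdigest()
    with open(archive / "log.txt", "a", encoding="utf-8") as log:
        log.write(f"{stamp}\t{copy.name}\t{digest}\n")
    return copy, digest
```

Usage: run `snapshot("essay.docx")` at the end of each writing session.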

Conclusion: Navigating AI Use in Academia

The distinction between proving AI use and merely suspecting it hinges on evidence versus indicators. Solid evidence - like drafts, revision histories, or research logs - shows how your work evolved, while subjective signs, such as abrupt style changes or overly generic language, only suggest possible AI involvement. As Bradley Emi from Pangram explains, "A positive AI detection is just the beginning of the conversation, and can never stand alone when punitive action against a student is being considered".

Detection tools, while helpful, offer probabilities rather than absolute proof. For example, by OpenAI's own evaluation, its original classifier correctly identified only 26% of AI-written text. Similarly, Turnitin marks scores between 0% and 20% with an asterisk to signal lower reliability. These tools are useful for spotting potential issues, but they shouldn't be the sole basis for accusations of academic misconduct.

The best way to protect yourself is by documenting your writing process. Save dated outlines, annotated PDFs, and version histories from platforms like Google Docs or Word. Be ready to explain your claims, define key terms, and walk through your methodology if questioned. If you can't confidently explain and defend your submission, you could be putting yourself at risk.

When using tools like Yomu AI, transparency is essential. Follow your course's policies on AI use, and remember that unless explicitly approved by your instructor, AI assistance is often considered similar to help from another person. Use AI to fine-tune grammar or improve sentence flow, but avoid relying on it to craft central arguments or entire sections.

At its core, academic integrity isn't about rejecting technology - it’s about ensuring that you remain the author of your ideas. AI can be a helpful tool, but it should support your thinking, not replace it. By using AI responsibly, you can maintain ownership of your work while benefiting from its capabilities.

FAQs

What counts as real proof that I used AI?

Real proof comes from verifiable evidence: revision histories showing large pasted blocks, document metadata, fabricated citations, or character-level traces such as mismatched quotation marks, ideally confirmed in a conversation about your work. Detector scores, uneven writing style, or text that feels overly polished might spark suspicion, but they aren't concrete proof on their own.

Can AI detectors alone get me in trouble?

AI detectors are helpful tools but not entirely dependable, as they can produce both false positives and negatives. Professors often rely on a combination of methods to verify AI usage. These might include examining stylistic inconsistencies, checking metadata, and comparing the work to a student's previous submissions. While detectors can highlight potential concerns, they don't provide conclusive evidence and shouldn't be used as the only basis for accusations. Relying too heavily on them can lead to unfair judgments if not applied carefully.

How can I protect myself if I used AI ethically?

Be upfront about which AI tools you used and how, and understand their limitations. Keep a detailed record of your process, including drafts and notes, to show the effort and thought behind your work. Follow your institution's and your course's guidelines for AI use, and be prepared to explain how you used these tools. A documented process that shows where AI did and did not contribute is the clearest way to demonstrate that you used it responsibly and ethically.