AI Abstract Generators: When They Help and When They Weaken a Paper

AI abstract generators can save time and improve clarity, but they come with risks. These tools, powered by large language models, create summaries of research papers in seconds. They’re widely used in academia, especially by non-native English speakers, to draft abstracts quickly and ensure proper structure. However, over-reliance on these tools can lead to oversimplified language, factual inaccuracies, and a loss of critical writing skills.

Key insights:

- Benefits: Speeds up writing, ensures clear structure, and supports non-native English writers.

- Drawbacks: Risks include generic phrasing, inaccuracies in complex topics, and reduced skill development.

- Best Practices: Treat AI outputs as drafts, verify all claims, and refine for accuracy and depth.

AI tools like Yomu AI are helpful starting points, but human judgment is essential to maintain research quality.

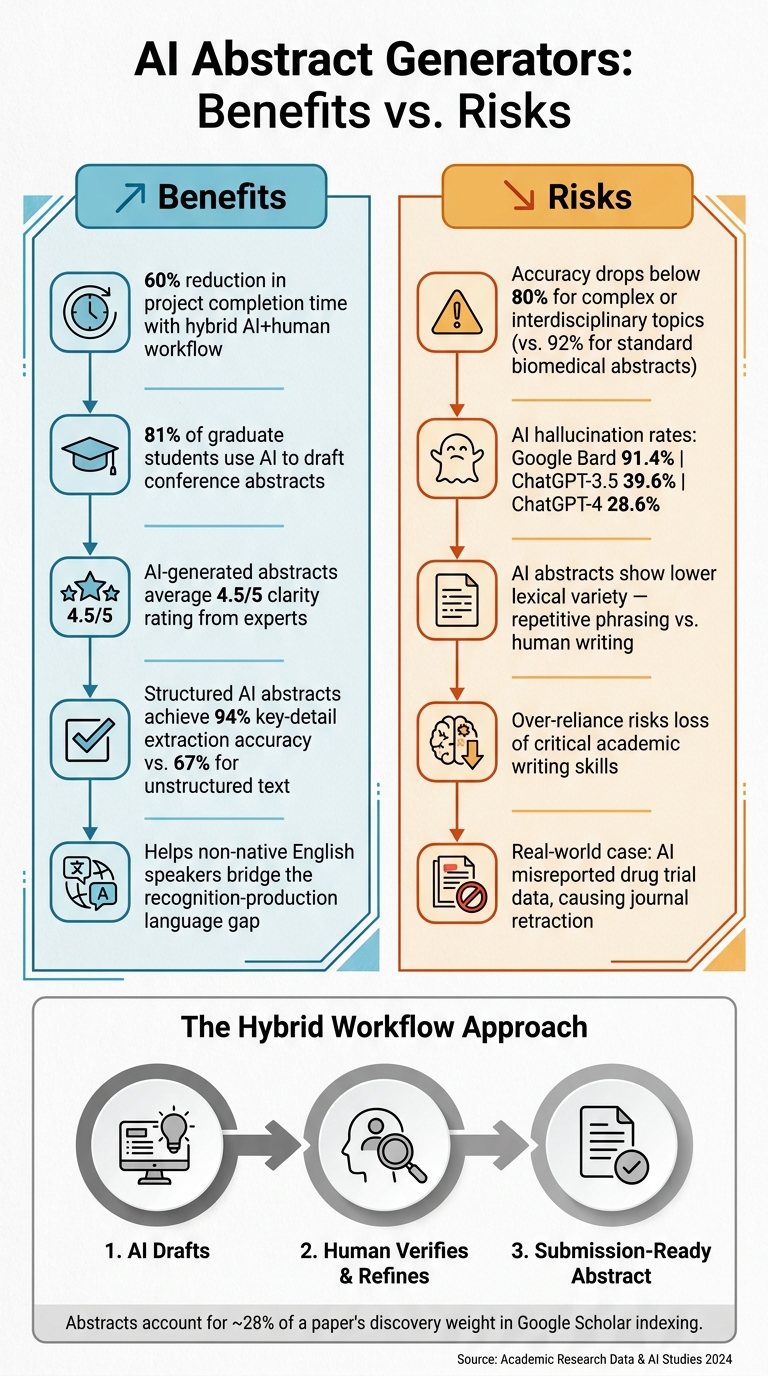

AI Abstract Generators: Benefits vs. Risks with Key Statistics

Benefits of AI Abstract Generators

Saving Time in the Writing Process

AI abstract generators are a huge time-saver, especially for researchers balancing multiple responsibilities like data collection, peer review, and teaching. These tools can help overcome writer's block by providing a starting point, offering an initial structure that can be refined later. A hybrid approach - where AI drafts the first version and a human polishes it - can reduce project completion time by up to 60% compared to starting from scratch.

In fact, 81% of graduate students report using AI specifically to draft conference abstracts.

"AI tools can streamline certain writing tasks, freeing time for deeper thinking, data collection, or other research activities that cannot be automated." - Daniel Felix, Author

Producing Clear and Well-Structured Abstracts

A strong abstract typically includes four key components: purpose, methods, results, and conclusions. However, many researcher-written abstracts blur these sections or leave one out entirely. AI tools excel at enforcing this structure, ensuring clarity and completeness.

Studies show that AI-generated abstracts receive an average clarity rating of 4.5/5, with experts sometimes finding them clearer and more complete than those written by researchers themselves. Additionally, structured abstracts with labeled sections like "Methods" and "Results" make key details easier to extract: extraction reaches 94% accuracy, compared with 67% for unstructured text.

This improved structure also makes abstracts more accessible to readers from diverse linguistic backgrounds.
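A quick way to see whether a draft covers all four components is to look for their labels. The sketch below is only an illustration: the function name `missing_components` and the accepted label spellings are assumptions, and it only works on abstracts that use explicit "Label:" headings.

```python
import re

# The four components named above. The accepted spellings are assumptions;
# journals vary (for example "Background" or "Objective" instead of "Purpose").
COMPONENTS = {
    "purpose": ("purpose", "objective", "background", "aim"),
    "methods": ("methods", "method", "methodology"),
    "results": ("results", "findings"),
    "conclusions": ("conclusions", "conclusion"),
}


def missing_components(abstract: str) -> list[str]:
    """Return the components that have no 'Label:' heading in the abstract."""
    # Collect every word that is directly followed by a colon.
    labels = {word.lower() for word in re.findall(r"\b([A-Za-z]+)\s*:", abstract)}
    return [
        name
        for name, aliases in COMPONENTS.items()
        if not labels.intersection(aliases)
    ]


draft = "Purpose: To test X. Methods: We surveyed 40 labs. Conclusions: X helps."
print(missing_components(draft))  # ['results']
```

An empty list means every component has a label. It does not mean the content under each label is complete or correct.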

Supporting Non-Native English Speakers

For non-native English speakers, writing clear and polished abstracts can be particularly challenging. Many face what’s known as the recognition and production gap - the ability to identify awkward phrasing without having the vocabulary to fix it.

AI tools help bridge this gap by allowing researchers to draft in straightforward language and then refine the tone, grammar, and phrasing to meet academic standards. The core meaning should stay intact throughout, which is why reviewing each revised sentence still matters. Dr. Ahmed Khan, a Public Health Researcher at the University of Toronto, shared his experience:

"As a non-native English writer, AI helps level the playing field. I still review every sentence, but it helps me avoid the language barriers that previously limited where I could publish."

Limitations and Risks of AI Abstract Generators

Risk of Generic or Oversimplified Abstracts

AI abstract generators often produce text that feels polished but lacks the nuanced understanding required in specialized fields. This happens because these tools rely heavily on pattern recognition, leading to outputs that follow predictable structures but lack depth. For instance, a study comparing 150 human-written abstracts with 150 AI-generated ones revealed that the AI versions had lower lexical variety. They leaned on repetitive phrases and standard academic frameworks, missing the richness and adaptability seen in human writing.
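Lexical variety can be approximated with a type-token ratio, the share of distinct words among all words. This is a minimal sketch that assumes this measure for illustration; the study mentioned above may have used a different one, and the function name `type_token_ratio` is hypothetical.

```python
import re


def type_token_ratio(text: str) -> float:
    """Share of distinct words among all words (0.0 for empty text)."""
    # Lowercase so "Results" and "results" count as the same word.
    tokens = re.findall(r"[a-z']+", text.lower())
    if not tokens:
        return 0.0
    return len(set(tokens)) / len(tokens)


print(type_token_ratio("the results show the results"))  # 0.6
```

The ratio falls as texts get longer, so it is only meaningful when comparing drafts of similar length, such as an AI draft and your revision of it.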

"Relying solely on AI will result in surface-level understanding, weakening the quality of your academic work." - Oxford LibGuides

This becomes a significant issue in areas where precise terminology is non-negotiable. Without the ability to grasp the intricacies of specific disciplines, AI-generated abstracts may fall short of accurately representing complex ideas.

AI's limitations don’t stop at generic phrasing; they extend into handling complex topics as well.

Accuracy Problems with Complex Topics

When tasked with summarizing intricate subjects, AI tools often stumble. While advanced large language models (LLMs) have shown impressive accuracy rates - up to 92% for biomedical abstracts - this figure drops to below 80% when dealing with more challenging or interdisciplinary material. These errors can manifest as misreported statistical data, omitted study limitations, or exaggerated claims of significance.

One particularly troubling issue is hallucination, where AI fabricates data or citations. For example, hallucination rates for generated references have been reported at 91.4% for Google Bard, 39.6% for ChatGPT-3.5, and 28.6% for ChatGPT-4. In one alarming case, an AI-generated summary of a drug trial misreported adverse event rates, leading to widespread misinformation and forcing a scientific journal to issue a retraction.

"AI summarizers often overstate study significance, risking misinformation among non-experts." - Inside Higher Ed

Such inaccuracies highlight the dangers of using AI-generated abstracts without thorough human review.

Over-Reliance and Loss of Writing Skills

While AI-generated abstracts can save time, excessive reliance on them poses a threat to the development of critical academic skills. Writing an abstract manually is more than just a task - it’s an intellectual exercise that deepens understanding and fosters critical thinking. Overuse of AI risks producing work that, while polished, lacks the originality and depth that academic reviewers value.

"Automated summaries may appear clear yet lack genuine scholarly depth." - Dr. Sam Taylor, Professor of Information Science

"The blind use of unverified AI text shows a lack of creativity, originality, independent reasoning, and intellectual depth, all critical skills in education and research." - Elizabeth Oommen George, Paperpal

Balancing the convenience of AI with the need for authentic scholarly engagement is essential to maintain the integrity and quality of academic work.

Best Practices for Using Yomu AI's Abstract Features

Understanding potential risks is just the beginning; the real challenge lies in leveraging AI writing aids to improve your work effectively.

Editing AI Outputs for Accuracy and Originality

AI-generated abstracts are not ready for submission as-is. Yomu AI's abstract tools provide a solid foundation, but they still require your expertise to refine them. When reviewing, go beyond surface-level edits. AI often leaves behind recognizable patterns - a rigid structure, predictable phrasing, and repetitive transitions. These "AI fingerprints" can make the text feel less nuanced and less scholarly.

"What separates novice from expert users is their ability to critically analyze and strategically modify AI-generated text." - Dr. Sarah Ramirez, Director of Writing Studies, MIT

Accuracy is another critical area to address. Always verify factual claims. AI can misinterpret data, confuse statistical relationships, or overlook essential methodological details. Replace vague terms with precise information, such as "demonstrated a 23% improvement", to make your abstract clearer and more discoverable.

Treating AI Output as a Draft, Not a Final Product

Dr. Fatima Rahman, a neuroscientist at Johns Hopkins University, offers a helpful perspective on using AI tools:

"With AI assistance, I can generate a solid first draft in hours, which gives me more time to refine the science rather than struggling with the writing process."

Adopting this mindset - viewing AI output as a starting point rather than a finished product - can transform how you approach your writing. After completing your paper, provide Yomu AI with detailed instructions, such as the target word count, journal requirements, and key findings. Use the generated draft as a framework, and focus on reshaping it to meet your standards, not just polishing it.

Once you've revised the draft, take a step back to evaluate its alignment with your research objectives.

Checking Alignment with Your Research Goals

After refining the AI-generated abstract, ensure it accurately represents your research. Does it reflect your methodology and conclusions without exaggerating them? AI tools sometimes lean toward overly confident language, which can present preliminary findings as definitive results.

Break the abstract into individual claims and cross-check each one against your paper. If a claim lacks a clear basis in your work, revise or remove it. Pay special attention to statistics and citations, and never trust AI-generated references without confirming them manually. Abstracts also carry significant weight in discovery: they reportedly account for about 28% of a paper's discovery weight in Google Scholar's indexing algorithm. What you say in your abstract, and how accurately you say it, can directly influence how your research is found and cited.
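The claim-by-claim check can be partly automated for numeric claims. The sketch below splits an abstract into sentences and flags any number that never appears in the paper's text. The function names are hypothetical, and the matching is deliberately literal: "23%" in the abstract will not match "23 percent" in the paper, so a flagged number is a prompt to look, not proof of an error.

```python
import re

# Integers, decimals, and percentages such as 120, 0.05, or 23.5%.
NUMBER = re.compile(r"\d+(?:\.\d+)?%?")


def split_claims(abstract: str) -> list[str]:
    """Split on sentence-ending punctuation followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", abstract.strip())
    return [part for part in parts if part]


def unsupported_numbers(abstract: str, paper_text: str) -> dict[str, list[str]]:
    """Map each claim to the numbers in it that never appear in the paper."""
    paper_numbers = set(NUMBER.findall(paper_text))
    report = {}
    for claim in split_claims(abstract):
        missing = [n for n in NUMBER.findall(claim) if n not in paper_numbers]
        if missing:
            report[claim] = missing
    return report


paper = "We enrolled 120 participants. Accuracy rose from 61% to 84%."
draft = "We enrolled 120 participants. Accuracy improved to 94%."
print(unsupported_numbers(draft, paper))
# {'Accuracy improved to 94%.': ['94%']}
```

A check like this catches only mismatched figures. Overstated wording, missing limitations, and invented citations still need a manual read.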

Conclusion: Balancing AI Tools with Human Judgment

Key Takeaways

AI abstract generators like Yomu AI bring undeniable advantages: quicker drafts, organized structures, and helpful language support for non-native English speakers. However, they come with their own set of challenges, such as overly simplified language, fabricated citations, and a lack of depth that might undermine your research's value.

The numbers highlight this duality. Reported accuracy reaches up to 92% for biomedical abstracts but drops below 80% on more challenging or interdisciplinary material. And when your abstract is the first impression for a journal editor or fellow researcher, those shortcomings can’t be ignored.

"The question isn't whether AI will be used for literature reviews, but how it will be used." - Dr. Marcus Wei, Research Methodologist, Oxford University

This reality points to the need for a balanced approach that uses AI for efficiency without sacrificing the quality and depth of scholarly work.

Finding the Right Balance

The best strategy is a hybrid workflow: let Yomu AI handle the initial draft - organizing ideas, refining language, and structuring content - while you take charge of verifying and enhancing the output. Dr. Hiroshi Tanaka, an immunologist at Kyoto University, captures this approach perfectly:

"The most valuable aspect for me isn't having AI write the paper - it's having a tireless collaborator who helps me clarify my thinking."

This mindset ensures you stay in control. AI can speed up the process, but every detail, claim, and citation still needs your scrutiny. In fact, major journals and publishers such as Nature, Science, and Elsevier now require authors to disclose any AI involvement in manuscript preparation. This policy reflects the research community's expectation that human accountability remains central to academic publishing.

When used thoughtfully, Yomu AI enhances your work without compromising its depth or integrity.

FAQs

How can I spot and fix hallucinated facts or citations in an AI-generated abstract?

Verify each source manually using reliable platforms such as Google Scholar or official publisher websites. Watch for red flags such as references to authors, journals, or DOIs that don't exist. Cross-check factual claims against your own paper and well-established academic sources, then correct or remove anything you cannot confirm. Automated tools can help streamline this process, but manual verification remains the gold standard for maintaining credibility and trustworthiness in AI-generated abstracts.
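As a small aid to that manual check, the sketch below pulls DOI-like strings out of a draft and turns each into a `https://doi.org/` link you can open. The function name `doi_links` is hypothetical, the pattern is a commonly used approximation rather than a full DOI grammar, and the DOIs in the example are placeholders. A well-formed DOI can still be fabricated, so each link has to be opened and compared with the cited title and authors.

```python
import re

# Approximate pattern for modern DOIs: "10.", a 4-9 digit prefix, "/", a suffix.
DOI_PATTERN = re.compile(r"\b10\.\d{4,9}/[-._;()/:A-Za-z0-9]+")


def doi_links(text: str) -> list[str]:
    """Return a https://doi.org/ link for each DOI-like string in the text."""
    links = []
    for match in DOI_PATTERN.findall(text):
        # Drop trailing sentence punctuation that the pattern may have absorbed.
        doi = match.rstrip(".,;)")
        links.append(f"https://doi.org/{doi}")
    return links


draft = "See Smith (2020), doi:10.1234/abcd.5678. Also 10.5555/xyz-99;"
for link in doi_links(draft):
    print(link)
# https://doi.org/10.1234/abcd.5678
# https://doi.org/10.5555/xyz-99
```

References that carry no DOI at all are not covered here and still need to be searched by title.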

When should I avoid using an AI abstract generator for my paper?

Avoid relying on an AI abstract generator when the abstract depends on precise understanding, critical analysis, or nuanced interpretation. These situations require a human touch to ensure your work stays authentic and accurately represents your research.

Do I need to disclose AI use when submitting an abstract to a journal or conference?

Yes, it's important to be upfront about using AI when submitting an abstract, especially if AI tools were involved in creating text, images, or tables. The degree of disclosure should align with how much AI contributed, as maintaining transparency is crucial in academic submissions.